The Illusion of "Too Much": Why a 2-Million Token Context Window is Smaller Than You Think

In the world of LLM-driven engineering, there is a pervasive myth: that a 2-million token context window is an excessive "ocean" of data that no reasonable application could ever fill.

Engineering teams often look at that number and assume it’s a luxury. In reality, when you move from simple text retrieval to complex system reasoning, 2 million tokens isn’t a luxury—it’s a tight budget.

The Math of Complexity: Why Functions Aren't Just Text

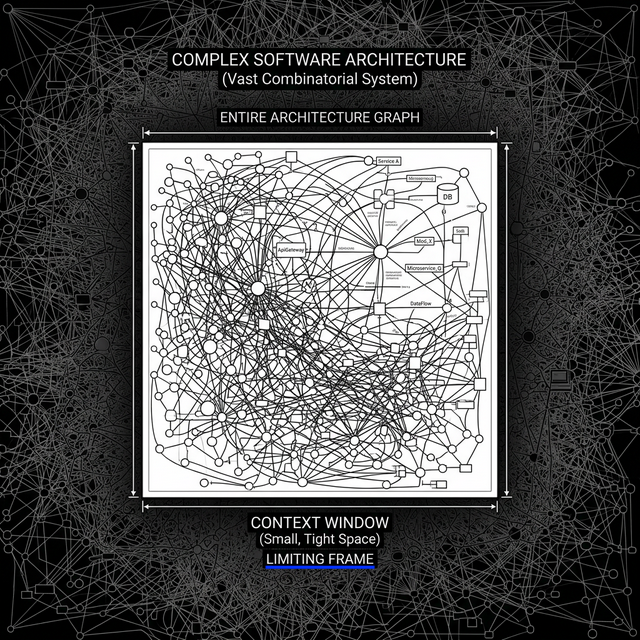

When we talk about a "very small app," we might be thinking of a few thousand lines of code. But an LLM isn't just reading code as a flat file; it’s trying to understand relationships.

If you ask an AI to reason about a codebase, you aren't just feeding it strings. You are feeding it a Graph.

1. The Proliferation of Metadata

To make an LLM "reason" effectively, we don't just send the raw source code. We send:

- Abstract Syntax Trees (ASTs): The structural map of the code.

- Dependency Graphs: How Function A interacts with Class B.

- Documentation & Comments: The intent behind the logic.

- State Information: Variables, types, and execution flow.

2. The Multiplier Effect of "Degrees of Separation"

Consider a modest microservice with just 50 functions and classes. In a vacuum, that might only be 50,000 tokens. However, once you map the nodes (functions) and edges (calls/dependencies), the volume explodes.

If you want the model to understand the impact of a change at a 5-degree depth (e.g., "If I change this utility function, how does it ripple through the API layer, the auth middleware, the database wrapper, and finally the client response?"), the context grows exponentially.

| Component | Estimated Tokens (Raw) | Tokens with Contextual Metadata |

|---|---|---|

| Single Function | ~500 | ~2,500 (incl. signatures & docs) |

| 50-Function App | 25,000 | 125,000 |

| 100 Nodes / 500 Edges | N/A | ~1,200,000+ |

When you include the Relationship Mapping—the description of how every one of those 500 edges behaves—your "small app" has already consumed over half of your 2-million token window.

The "Possibility Explosion"

The real "context killer" isn't the data itself; it's the combinatorial explosion of possibilities.

In a system with 500 edges, the number of potential execution paths is astronomical. To ask an LLM to debug a race condition or a logic flaw across a 5-degree connection requires the model to hold the "state" of all those potential paths in its "active memory" (the context window).

The Reality Check: A 2-million token window is roughly equivalent to a few thick textbooks. While that sounds like a lot, try fitting the entire technical documentation, the source code, the Jira history, and the architectural diagrams of a modern enterprise project into three books. You'll run out of pages before you reach the "Testing" chapter.

Why "Too Big" is a Dangerous Misconception

When engineering teams believe the window is "too big," they stop optimizing. They assume they can just "dump everything in." This leads to:

- Lost Focus: Even with 2 million tokens, "Lost in the Middle" phenomena can occur where the model misses nuances in a sea of data.

- Efficiency Debt: Teams fail to build intelligent RAG (Retrieval-Augmented Generation) structures, assuming the window will save them.

- Scale Paralysis: When the app grows from 50 functions to 500, the system breaks because the "dump it all in" strategy hit a hard ceiling.

Final Thought

A 2-million token context window isn't a vast territory to explore; it’s a high-resolution snapshot. It allows us to see the "forest" and the "trees" simultaneously for the first time, but we are still looking at a very small grove in a much larger digital jungle.

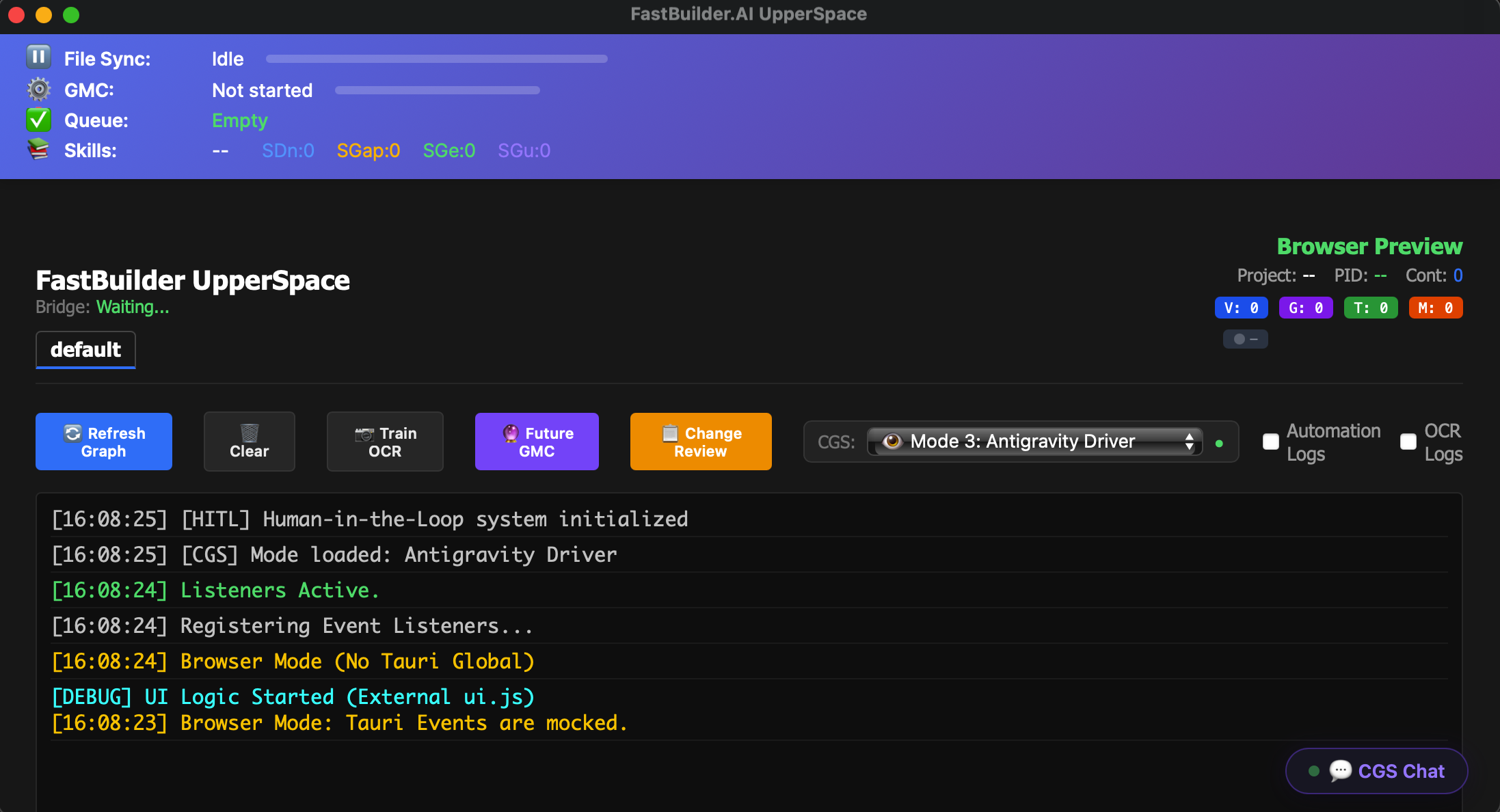

Navigating Billions of Tokens: How FastBuilder.AI Stops Hallucinations and Saves Hundreds of Dev Hours

The AI coding revolution promised us 10x engineering speed. But for many teams, that promise quickly turns into a nightmare of debugging "AI hallucinations" and burning through massive token budgets. When your dev agents lose context across a sprawling codebase, you aren't saving time—you're just shifting the work from writing code to untangling AI-generated messes.The root cause? Relying on massive context windows to understand entire architectural graphs is fundamentally flawed. Dumping millions of tokens into an LLM and hoping it maintains architectural integrity across billions of possible combinatorial paths is a recipe for disaster.

The Solution: A Token-Free Architecture Orchestrator

At FastBuilder.AI, we took a radically different approach. Instead of trying to force massive codebases into expensive, brittle context windows, we built a Token-Free Architect Agent.Think of it as the air traffic controller for your automated development workflows.

Our Architect Agent doesn't read your entire repository as a flat text file. It understands your system topology, dependencies, and architectural intent natively. It then uses this deep, structured awareness to orchestrate your individual dev agents, guiding them through billions of equivalent tokens of context without actually consuming those tokens in an LLM call.

How It Works: IDE & MCP Integration

Whether your team relies on locally hosted agents in their IDEs or centralized agents via the Model Context Protocol (MCP), FastBuilder.AI acts as the unified guiding layer:- Contextual Precision via IDE: When an agent attempts to write a new feature in the IDE, our Architect Agent intervenes dynamically. It provides the exact structural constraints and dependency rules needed for that specific task.

- System-Wide Alignment via MCP: For enterprise-wide tools and backend automation, the Architect Agent ensures every agent operates with a shared, hallucination-free understanding of the system's "True Component Signatures" and topology.

The ROI: Saving 100s of Wasted Hours

By sitting above the fray and orchestrating the agents, the FastBuilder Architect Agent delivers two massive wins for engineering teams:- Zero-Hallucination Architecture: Dev agents are guided with absolute precision. No more phantom libraries, conflicting logic, or architectural drift.

- Drastic Cost Reduction: By eliminating the need to shove millions of tokens into context windows just to maintain basic system awareness, you stop "breaking the bank" on API calls.

Stop managing AI chaos. Start orchestrating architecture.