How to Prevent AI Code Hallucinations: The Complete Engineering Guide

AI code hallucinations, where an AI model generates plausible-looking but incorrect code, cost engineering teams an estimated $2.1 billion in debugging time in 2025. Whether the model fabricates an API endpoint or invents a library function, hallucinated code can pass code review because it looks correct even when it fundamentally isn't.

What Are AI Code Hallucinations?

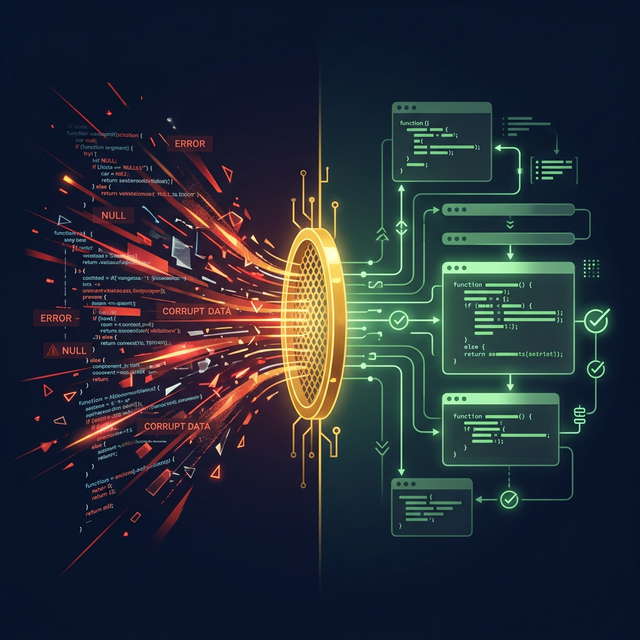

An AI code hallucination occurs when a large language model generates code that references APIs, functions, libraries, or patterns that do not exist in the target codebase or ecosystem. Unlike human errors, hallucinations are structurally plausible — they follow correct syntax and naming conventions while being semantically wrong.

Common Types of Code Hallucinations

- Phantom APIs: Calling methods that don't exist on a class or module

- Version Confusion: Using syntax from a different version of a library

- Pattern Fabrication: Inventing design patterns that aren't used in the codebase

- Import Hallucination: Importing packages that don't exist or have different names

- Interface Mismatch: Generating function signatures that don't match the actual API contract
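Two of these categories, import hallucinations and phantom APIs, can often be caught with a static check before the code ever runs. The following is a minimal sketch in Python, assuming the generated code targets the same environment the checker runs in; the function names `module_exists` and `find_hallucinated_references` are illustrative, not part of any tool mentioned in this guide.

```python
import ast
import importlib
import importlib.util


def module_exists(name: str) -> bool:
    """True if `name` can be located in the current environment."""
    try:
        return importlib.util.find_spec(name) is not None
    except (ImportError, ValueError):
        # Raised for dotted names whose parent package is missing,
        # or for malformed module names.
        return False


def find_hallucinated_references(source: str) -> list[str]:
    """Report imports that do not resolve and `module.attr` uses that do not exist."""
    tree = ast.parse(source)
    problems: list[str] = []
    modules: dict[str, str] = {}  # local name -> real module name

    # Pass 1: every `import x` statement must resolve (import hallucination).
    for node in ast.walk(tree):
        if isinstance(node, ast.Import):
            for alias in node.names:
                if not module_exists(alias.name):
                    problems.append(
                        f"line {node.lineno}: module '{alias.name}' not found"
                    )
                elif alias.asname:
                    modules[alias.asname] = alias.name
                else:
                    top = alias.name.split(".")[0]
                    modules[top] = top

    # Pass 2: every `module.attr` must exist on the real module (phantom API).
    # Note: this imports the module, which executes its top-level code.
    for node in ast.walk(tree):
        if (
            isinstance(node, ast.Attribute)
            and isinstance(node.value, ast.Name)
            and node.value.id in modules
        ):
            module = importlib.import_module(modules[node.value.id])
            if not hasattr(module, node.attr):
                problems.append(
                    f"line {node.lineno}: '{node.value.id}.{node.attr}' does not exist"
                )
    return problems


if __name__ == "__main__":
    generated = "import json\njson.parse('{}')\n"  # json.parse is a JavaScript idiom
    for problem in find_hallucinated_references(generated):
        print(problem)
```

This sketch only handles plain `import x` statements and direct `module.attr` access. Version confusion and interface mismatches need more than an existence check, since the name can exist while the signature or behavior differs.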

Why Traditional AI Tools Hallucinate

Traditional AI code generation tools like GitHub Copilot and Cursor use probabilistic token prediction. They predict the most likely next token based on patterns in their training data and the surrounding prompt. On its own, this approach cannot guarantee correctness because it lacks a ground truth model of the specific codebase being edited.
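A toy illustration of why likelihood and correctness can diverge: the sketch below picks the most frequent continuation of `json.` from a tiny, invented set of training pairs. The counts are made up purely for illustration, and real models are vastly more sophisticated, but the failure mode has the same shape: the statistically likely token (`parse`, common in JavaScript) is not a function in Python's `json` module.

```python
import json
from collections import Counter

# Invented miniature "training data": (context, next token) pairs.
# The frequencies are made up for illustration only.
TRAINING_PAIRS = [
    ("json.", "parse"), ("json.", "parse"), ("json.", "parse"),
    ("json.", "loads"), ("json.", "loads"),
    ("json.", "dumps"),
]


def most_likely_next(context: str) -> str:
    """Return the continuation seen most often after `context`."""
    counts = Counter(tok for ctx, tok in TRAINING_PAIRS if ctx == context)
    token, _ = counts.most_common(1)[0]
    return token


suggestion = most_likely_next("json.")      # the "most probable" token
exists_in_python = hasattr(json, suggestion)  # ground truth from the real module
print(f"suggested json.{suggestion}, exists in Python: {exists_in_python}")
```

The predictor has no way to consult the real module; only the separate `hasattr` check, which plays the role of ground truth here, reveals the mismatch.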

The Topological Verification Approach

FastBuilder.AI eliminates hallucinations through topological verification — a mathematical approach that maps every component, function, data flow, and event in a codebase into a verified Golden Mesh. Every generated code fragment is validated against this mesh before it reaches the developer.

How CBFDAE Prevents Hallucinations

The CBFDAE framework (Components, Blocks, Functions, Data, Access, Events) creates a complete topological map of a codebase:

- Components are validated as independent architectural units

- Blocks are verified as coherent groups of related functions

- Functions are checked against actual API contracts and signatures

- Data flows are traced end-to-end to prevent phantom references

- Access controls ensure generated code respects authorization boundaries

- Events are verified against the actual event bus topology
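To make the "Functions are checked against actual API contracts and signatures" item concrete, here is a minimal sketch of signature checking in Python. It is not FastBuilder.AI's implementation, which this guide does not describe; the registry `KNOWN_FUNCTIONS`, the stand-in function `send_invoice`, and the helper `check_call` are all hypothetical names chosen for illustration.

```python
import inspect
from typing import Callable, Optional


def send_invoice(customer_id: int, amount: float, *, currency: str = "USD") -> str:
    """Stand-in for a real function in the codebase (hypothetical)."""
    return f"invoice:{customer_id}:{amount:.2f}:{currency}"


# Hypothetical registry standing in for a verified map of the codebase.
KNOWN_FUNCTIONS: dict[str, Callable] = {"send_invoice": send_invoice}


def check_call(name: str, *args, **kwargs) -> Optional[str]:
    """Return None if the call fits a known signature, else a reason string."""
    func = KNOWN_FUNCTIONS.get(name)
    if func is None:
        return f"unknown function '{name}'"
    try:
        # bind() applies Python's argument-matching rules without calling func.
        inspect.signature(func).bind(*args, **kwargs)
    except TypeError as exc:
        return f"signature mismatch for '{name}': {exc}"
    return None


if __name__ == "__main__":
    print(check_call("send_invoice", 42, 19.99, currency="EUR"))  # fits
    print(check_call("send_invoice", 42, 19.99, "EUR"))  # currency is keyword-only
    print(check_call("send_receipt", 42))  # not in the registry
```

Binding arguments checks arity and parameter names only. It does not check argument types, data flow, access controls, or events, which the other items in the list address.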

5 Practical Steps to Reduce Hallucinations Today

- Constrain context windows: Provide only relevant files, not entire repos

- Use type-aware tools: TypeScript and strict typing catch many hallucinations at compile time

- Implement CI/CD gates: Automated testing catches hallucinated imports and APIs

- Adopt formal verification: Tools like FastBuilder.AI mathematically verify correctness

- Review against architecture docs: Compare generated code against documented system design
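The first step, constraining the context, can be partly automated by following a file's imports instead of sending the whole repository. The sketch below is one possible approach under simplifying assumptions: a flat repository where module `x` lives in `x.py`, represented as an in-memory dict of path to source. The helper names `imported_modules` and `select_context` are illustrative.

```python
import ast


def imported_modules(source: str) -> set[str]:
    """Top-level module names imported by `source` (absolute imports only)."""
    names: set[str] = set()
    for node in ast.walk(ast.parse(source)):
        if isinstance(node, ast.Import):
            names.update(alias.name.split(".")[0] for alias in node.names)
        elif isinstance(node, ast.ImportFrom) and node.module and node.level == 0:
            names.add(node.module.split(".")[0])
    return names


def select_context(target: str, repo: dict[str, str]) -> list[str]:
    """Return the target file plus the repo files it imports, transitively."""
    selected: list[str] = []
    queue = [target]
    while queue:
        path = queue.pop()
        if path in selected or path not in repo:
            continue  # already visited, or a third-party/stdlib module
        selected.append(path)
        for module in imported_modules(repo[path]):
            queue.append(f"{module}.py")  # assumes a flat layout
    return sorted(selected)


if __name__ == "__main__":
    repo = {
        "billing.py": "import models\nimport json\n",
        "models.py": "from db import connect\n",
        "db.py": "",
        "unrelated.py": "import os\n",
    }
    print(select_context("billing.py", repo))
```

Files outside the import closure, such as `unrelated.py` in the demo, are left out of the context. Real repositories with packages, relative imports, or dynamic imports would need a more careful resolver.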

The Cost of Not Addressing Hallucinations

Engineering teams that don't address AI hallucinations face compounding technical debt. Each undetected hallucination becomes a latent bug that surfaces unpredictably, often in production, where it is most expensive to diagnose. The solution isn't to stop using AI; it's to verify AI output against a deterministic model of your codebase.